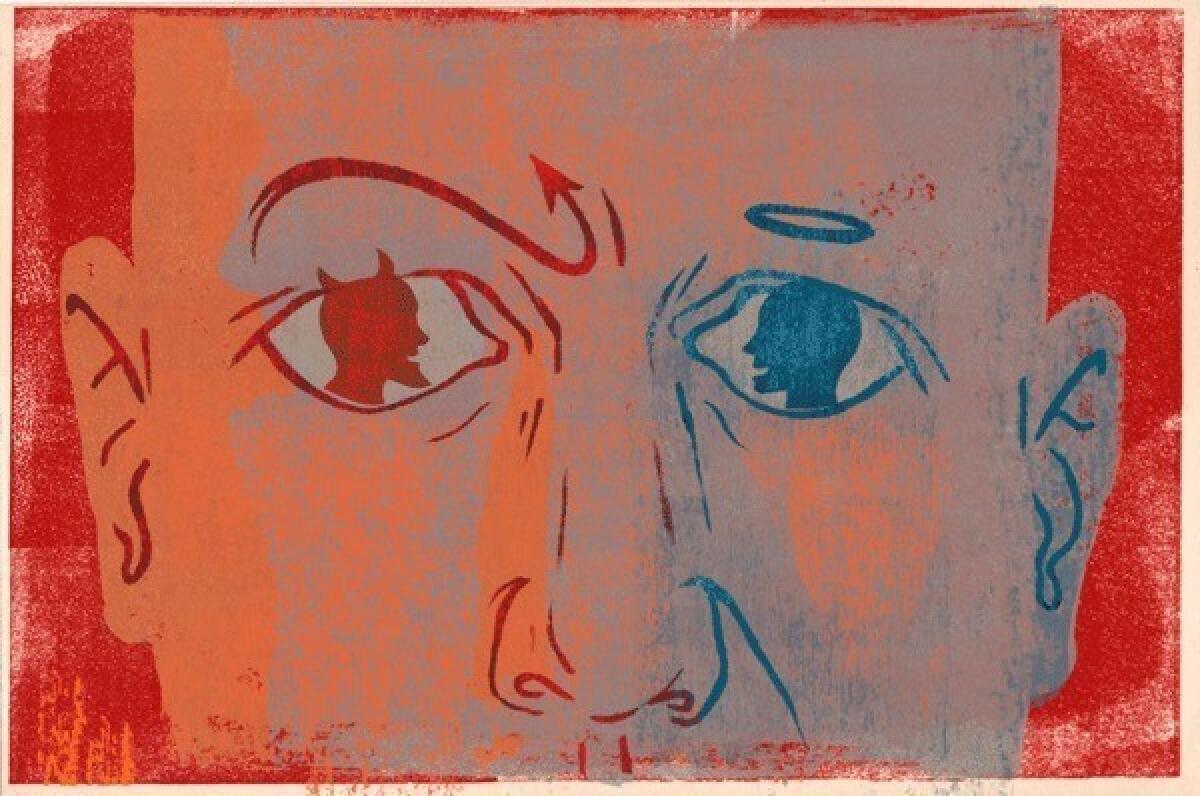

Human -- for better or worse

Are people, by nature, kind or rotten? This question has kept philosophers, theologians, social scientists and writers busy for millenniums.

A vote for our basic rottenness comes from scholars such as Steven Pinker of Harvard, who has documented how it is the regulating forces of society, rather than human nature, that have brought a decline in human violence over the centuries. A vote for our basic decency comes, surprisingly, from work by primatologists such as Frans de Waal of Emory University, who have observed that other primates display the basics of altruism, reciprocity, empathy and a sense of justice. Those virtues have a long legacy that precedes humans.

It’s obviously hard to answer the question of what primordial humans were like, since we can’t go back in time and study them. But another way of getting at this issue is to study people who must act in a primordial manner, having to make instant gut decisions. Do we tend to become more or less noble than usual when we must act on rapid intuition?

A recent study by David Rand and colleagues at Harvard, published in the prestigious journal Nature, sheds light on this, and the research is tragically relevant. The authors recruited volunteers to play one of those economic games in which individuals in a group are each given some hypothetical money; each person must decide whether to be cooperative and benefit the entire group, or to act selfishly and receive greater individual gain. A key part of the experiment was that the scientists altered how much time subjects had to decide whether to cooperate. And that made a difference. When people had to make a rapid decision based on their gut, levels of cooperation rose; give them time to reflect on the wisdom of their actions, and the opposite occurred.

Testing a new set of volunteers, the authors also manipulated how much respect subjects had for intuitive decision-making. Just before participating in the economics game, people had to either write a paragraph about a time that it had paid off to make a decision based on intuition rather than reflection, or a paragraph about a time when reflection turned out to be the best way to go. Bias people toward valuing quick, intuitive decision-making, and they acted more for the common good in the subsequent game. In contrast, bias people in the reflective direction, and “looking out for No. 1” comes more to the forefront — something the authors termed “calculated greed.”

Naturally, not everyone behaved identically in response to these experimental manipulations. Where might differences come from? The authors asked participants a simple question: On a scale of 1 to 10, how much can you trust people whom you interact with in your daily life? And the more trusting subjects were, the more quick, intuitive thinking pushed them in the direction of cooperation. If you view the world as a benevolent place, your rapid-fire, reflexive response in a situation is more likely to spread that benevolence further.

Neuroscience has generated a trendy new subfield called “neuroeconomics,” which examines how the brain makes economic decisions. The field’s punch line is that we are not remotely the gleaming, logical machines of rationality that most economists proclaim; instead, we make decisions amid the swirl of our best and worst emotions. Neuroeconomics, in turn, has spawned the sub-subfield of “the neuroscience of moral judgment.” Scientists such as Jonathan Haidt of New York University have shown that we frequently feel rather than think our way to moral judgments; in general, the more affective parts of our brains generate quick, intuitive, moral decisions (“I can’t tell you why, but that is wrong, wrong, wrong”), while the more cognitive parts play catch-up milliseconds to years later to come up with logical rationales for our gut intuitions. Thus, it is obviously important to understand what leads intuitive decisions in the direction of acting for the common good.

What’s the relevance of this research to current events? Every parent can tell you that sharing and cooperating are definitely acquired traits for children. Now, kids don’t learn to act for the common good through moral reasoning — 5-year-olds don’t think, “My goodness, if I act with self-interest at this juncture, it will decrease the likelihood of future reciprocal altruism, thereby depressing levels of social capital in my community.” Kids don’t learn to care for the well-being of others by thinking — brain development isn’t quite there yet. They do so by feeling — imagine how that person feels, imagine how you would feel if that were done to you. Barney and Mister Rogers, rather than Immanuel Kant and Soren Kierkegaard. A world in which goodness is an act of intuition, rather than of reasoning. As well as a world in which cynicism and distrust are not yet commonplace.

This must do interesting things to an adult who spends lots of time around young kids, this world of trust and intuition-based decency. Say, a teacher, or school psychologist or principal in a suburban Connecticut elementary school. And then it happens, a circumstance that no human can anticipate, a moment of horrific menace, where there is only a reflexive instant to decide whose well-being comes first. What happens then?

What happens? Anne Marie Murphy. Dawn Hochsprung. Lauren Rousseau. Mary Sherlach. Rachel D’Avino. Victoria Soto. That’s what happens. And the rest of us can only stand in awe at the heartbreaking beauty of what they did.

Robert M. Sapolsky is a professor of neuroscience at Stanford University and the author of “A Primate’s Memoir,” among other books. He is a contributing writer to Opinion.
