Column: The artificial intelligence field is infected with hype. Here’s how not to get duped

The star of the show at Tesla’s annual “AI Day” (for “artificial intelligence”) on Sept. 30 was a humanoid robot introduced by Tesla Chief Executive Elon Musk as “Optimus.”

The robot could walk, if gingerly, and perform a few repetitive mechanical tasks such as waving its arms and wielding a watering can over plant boxes. The demo was greeted enthusiastically by the several hundred engineers in the audience, many of whom hoped to land a job with Tesla.

“This means a future of abundance,” Musk proclaimed from the stage. “A future where there is no poverty. ... It really is a fundamental transformation of civilization as we know it.”

Robotics experts watching remotely were less impressed. “Not mind-blowing” was the sober judgment of Christian Hubicki of Florida State University.

Some AI experts were even less charitable. “The event was quite the dud,” Ben Shneiderman of the University of Maryland told me. Among other shortcomings, Musk failed to articulate a coherent use case for the robot — that is, what would it do?

Get the latest from Michael Hiltzik

Commentary on economics and more from a Pulitzer Prize winner.

You may occasionally receive promotional content from the Los Angeles Times.

To Shneiderman and others in the AI field, the Tesla demo embodied some of the worst qualities of AI hype: its reduction to humanoid characters, its exorbitant promises, its promotion by self-interested entrepreneurs and its suggestion that AI systems or devices can function autonomously, without human guidance, to achieve results that outmatch human capacities.

“When news articles uncritically repeat PR statements, overuse images of robots, attribute agency to AI tools, or downplay their limitations, they mislead and misinform readers about the potential and limitations of AI,” Sayash Kapoor and Arvind Narayanan wrote in a checklist of AI reporting pitfalls posted online the very day of the Tesla demo.

“When we talk about AI,” Kapoor says, “we tend to say things like ‘AI is doing X — artificial intelligence is grading your homework,’ for instance. We don’t talk about any other technology this way — we don’t say, ‘the truck is driving on the road’ or ‘a telescope is looking at a star.’ It’s illuminating to think about why we consider AI to be different from other tools. In reality, it’s just another tool for doing a task.”

That is not how AI is commonly portrayed in the media or, indeed, in announcements by researchers and firms engaged in the field. There, the systems are described as having learned to read, to grade papers or to diagnose diseases at least as well as, or even better than, humans.

Kapoor believes that the reason some researchers may try to hide the human ingenuity behind their AI systems is that it’s easier to attract investors and publicity with claims of AI breakthroughs — in the same way that “dot-com” was a marketing draw around the year 2000 or “crypto” is today.

What is typically left out of much AI reporting is that the machines’ successes apply in only limited cases, or that the evidence of their accomplishments is dubious. Some years ago, the education world was rocked by a study purporting to show that machine- and human-generated grades of a selection of student essays were similar.

The claim was challenged by researchers who questioned its methodology and results, but not before headlines appeared in national newspapers such as: “Essay-Grading Software Offers Professors a Break.” One of the study’s leading critics, Les Perelman of MIT, subsequently built a system he dubbed the Basic Automatic B.S. Essay Language Generator, or Babel, with which he demonstrated that machine grading couldn’t tell the difference between gibberish and cogent writing.

“The emperor has no clothes,” Perelman told the Chronicle of Higher Education at the time. “OK, maybe in 200 years the emperor will get clothes. ... But right now, the emperor doesn’t.”

A more recent claim was that AI systems “may be as effective as medical specialists at diagnosing disease,” as a CNN article asserted in 2019. The diagnostic system in question, according to the article, employed “algorithms, big data, and computing power to emulate human intelligence.”

Those are buzzwords that promoted the false impression that the system actually did “emulate human intelligence,” Kapoor observed. Nor did the article make clear that the AI system’s purported success was seen in only a very narrow range of diseases.

AI hype not only endangers laypersons’ understanding of the field but also threatens to undermine the field itself. One key to human-machine interaction is trust, but if people begin to see the field as having overpromised and underdelivered, the route to public acceptance will only grow longer.

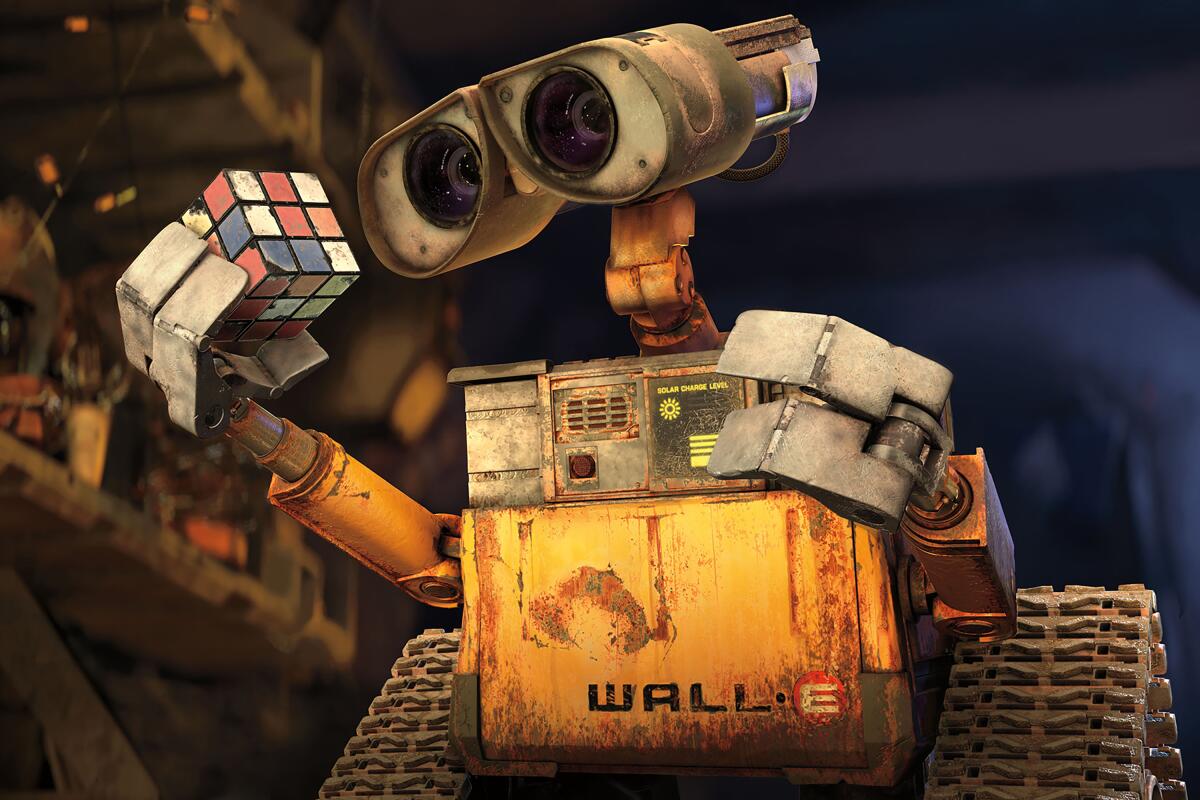

Oversimplification of achievements in artificial intelligence evokes scenarios familiar from science fiction: futurescapes in which machines take over the world, reducing humans to enslaved drones or leaving them with nothing to do but laze around.

A persistent fear is that AI-powered automation, supposedly cheaper and more efficient than humans, will put millions of people out of work. This concern was triggered in part by a 2013 Oxford University paper estimating that “future computerization” placed 47% of U.S. employment at risk.

Shneiderman rejected this forecast in his book “Human Centered AI,” published in January. “Automation eliminates certain jobs, as it has ... from at least the time when Gutenberg’s printing presses put scribes out of work,” he wrote. “However, automation usually lowers costs and increases quality.... The expanded production, broader distribution channels, and novel products lead to increased employment.”

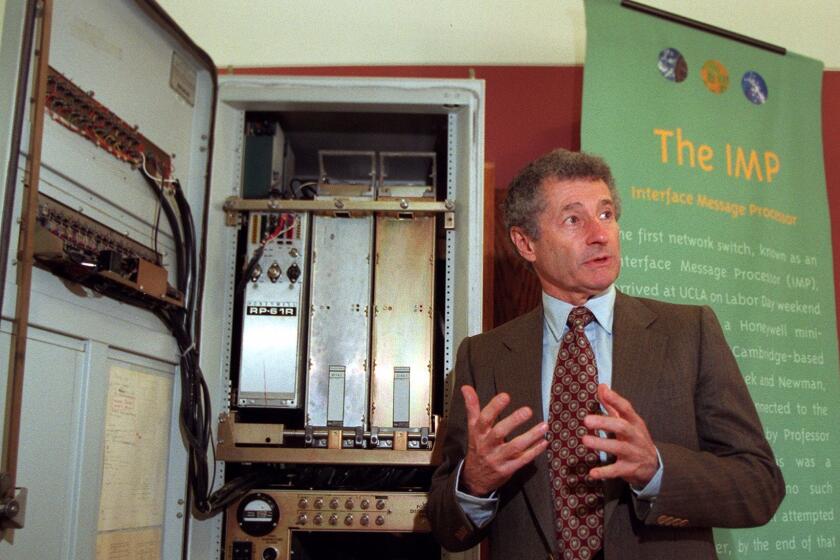

Technological innovations may render older occupations obsolete, according to a 2020 MIT report on the future of work, but also “bring new occupations to life, generate demands for new forms of expertise, and create opportunities for rewarding work.”

A common feature of AI hype is the drawing of a straight line from an existing accomplishment to a limitless future in which all the problems in the way of further advancement are magically solved, and therefore success in reaching “human-level AI” is “just around the corner.”

Yet “we still don’t have a learning paradigm that allows machines to learn how the world works, like human and many non-human babies do,” Yann LeCun, chief AI scientist at Meta Platforms (formerly Facebook) and a professor of computer science at NYU, observed recently on Facebook. “The solution is not just around the corner. We have a number of obstacles to clear, and we don’t know how.”

So how can readers and consumers avoid getting duped by AI hype?

Beware of the “sleight of hand that asks readers to believe that something that takes the form of a human artifact is equivalent to that artifact,” counsels Emily Bender, a computational linguistics expert at the University of Washington. That includes claims that AI systems have written nonfiction, composed software or produced sophisticated legal documents.

The system may have replicated those forms, but it doesn’t have access to the multitude of facts needed for nonfiction or the specifications that make a software program work or a document legally valid.

Among the 18 pitfalls in AI reporting cited by Kapoor and Narayanan are the anthropomorphizing of AI tools through images of humanoid robots (including, sadly, the illustration accompanying this article) and descriptions that attribute human-like intellectual qualities such as “learning” or “seeing” to them. These tend to be simulations of human behavior, far from the real thing.

Readers should beware of phrases such as “the magic of AI” or references to “superhuman” qualities, which “implies that an AI tool is doing something remarkable,” they write. “It hides how mundane the tasks are.”

Shneiderman advises reporters and editors to take care to “clarify human initiative and control. ... Instead of suggesting that computers take actions on their own initiative, clarify that humans program the computers to take these actions.”

It’s also important to be aware of the source of any exaggerated claims for AI. “When an article only or primarily has quotes from company spokespeople or researchers who built an AI tool,” Kapoor and Narayanan advise, “it is likely to be over-optimistic about the potential benefits of the tool.”

The best defense is healthy skepticism. Artificial intelligence has progressed over recent decades, but it is still in its infancy, and claims for its applications in the modern world, much less into the future, are inescapably incomplete.

To put it another way, no one knows where AI is heading. It’s theoretically possible that, as Musk claimed, humanoid robots may eventually bring about “a fundamental transformation of civilization as we know it.” But no one really knows when or if that utopia will arrive. Until then, the road will be pockmarked by hype.

As Bender advised readers of an especially breathless article about a supposed AI advance: “Resist the urge to be impressed.”
